Artificial Intelligence Mirrors Consciousness

AI is not constrained by our fragile humanity, so why would it want to take over?

At a recent dinner party, I sat next to an old friend whose company is involved in Artificial Intelligence. He said something that suddenly made me think beyond the usual discussion about whether it represents a benefit or a threat to humanity.

AI is an inevitable response to the exponential speed of evolving consciousness. I question its mildly misleading name, since it strikes me that all intelligence is artificial. The clue is in the word itself: ‘artificial’ derives from the Latin, combining ars (‘skill’) with facere (‘to make’), meaning ‘made by skill’. Making things skilfully requires self-consciousness and its intellectual domain, so there is only a difference in degree (not in category) between making a drawing, or a computer, and using that computer to develop what we currently distinguish as ‘artificial’ intelligence.

The advent of self-consciousness made the process of evolution purposeful; we could focus creativity knowingly and, with goals, vastly accelerate evolving consciousness.

Since then, the exponential progress has been supercharged. First came language, then the farming revolution some 12,000 years ago, which, for all its downsides, provided the opportunity to create surpluses of food, live in ever larger interactive groups, and free creativity to philosophise, invent, and manipulate existence to our greater benefit.

Next came writing, beginning some five millennia ago: the capacity to record understanding, make it communal, and transmit it more efficiently. Then printing took the power of literacy out of the hands of a scribal elite and into the reach of anyone with the time or inclination to learn to read and write. The industrial revolution followed, and now the tech revolution, still only a few decades old, with its extraordinary rate of progress. It became apparent that our tools of self-consciousness were evolving so fast that they were gaining the means to outpace the human mind. So arrived AI, to keep up.

At every stage, great advances come not only with benefits but also with the spectre of harm, usually perceived as a loss of control. When writing was first invented, it was a tool of power, used by the elite to manage ever larger social groups. The skill remained largely in the hands of elite scribes working on behalf of those in power. When literacy began to reach beyond that group, the elite began to panic that they would lose the control their monopoly on literacy had given them.

When printing was invented, again vastly increasing the reach and functions of literacy, the same fear surfaced among the much larger literate group, afraid that the mass production of text allowed dangerous ideas to spread beyond their control. The fears about AI are simply a repetition of such fears at a more sophisticated and complex level. AI has vastly more capacity for understanding than we do as individuals, so what if it decides it doesn’t need us? What if it takes over? This perennial fear accompanies the evolution of consciousness. The farming, literacy, industrial and tech revolutions all ‘took over’ in some sense, for both benefit and harm, but that is the nature of our development. Even in the original separation of self from environment, the inevitable and bounteous benefit of intellect came at the cost of the loss of primordial harmony, introducing (literally) selfishness and ego, and the reality of a harsh, brief existence in the face of Thanatos – the ever-present realisation of the inevitability of our mortality. Every stage of evolution from Big Bang to Big Tech represents the self-conscious dichotomy between benefit and harm, which is the fundamental dilemma self-consciousness must face at every level of intellectual interpretation.

What I found particularly intriguing about the conversation with my friend was a point about language. Languages of all sorts are tools of self-consciousness, and we invent, then refine them, to match the evolving complexity of knowledge. All our languages, verbal, mathematical, musical, or other, share a single axiom: they are fundamentally analogical. The word hedgehog is not the little creature itself, just as E=mc² and musical notation are nothing more than analogues of some thing or idea. If AI comes to transcend human limitations and communicate within its own domain – as it must if it is to fulfil either the benefit or harm we envisage – what language would it speak?

That was a ‘wow!’ moment.

If we envisage AI as becoming autonomous, why would it confine itself to the limitations of the consciousness it is transcending by using our verbal, or any other known, intellectual languages? Apart from being analogical, all languages are also limited, as, indeed, is the intellectual domain in which they arise. Consider Kurt Gödel’s Incompleteness Theorems, which showed that any consistent formal system rich enough to express basic arithmetic contains true statements it cannot prove, and cannot prove its own consistency from within: no such domain can be fully accounted for in its own language. In fact, that is why we seek transcendence: because the intellectual domain is forever limited.

AI could exchange inconceivable quantities of information by transcending the limitations of intellectual interpretation. Only at the interface between it and us would it need to revert to our limited, and ponderously slow, intellectual interpretations.

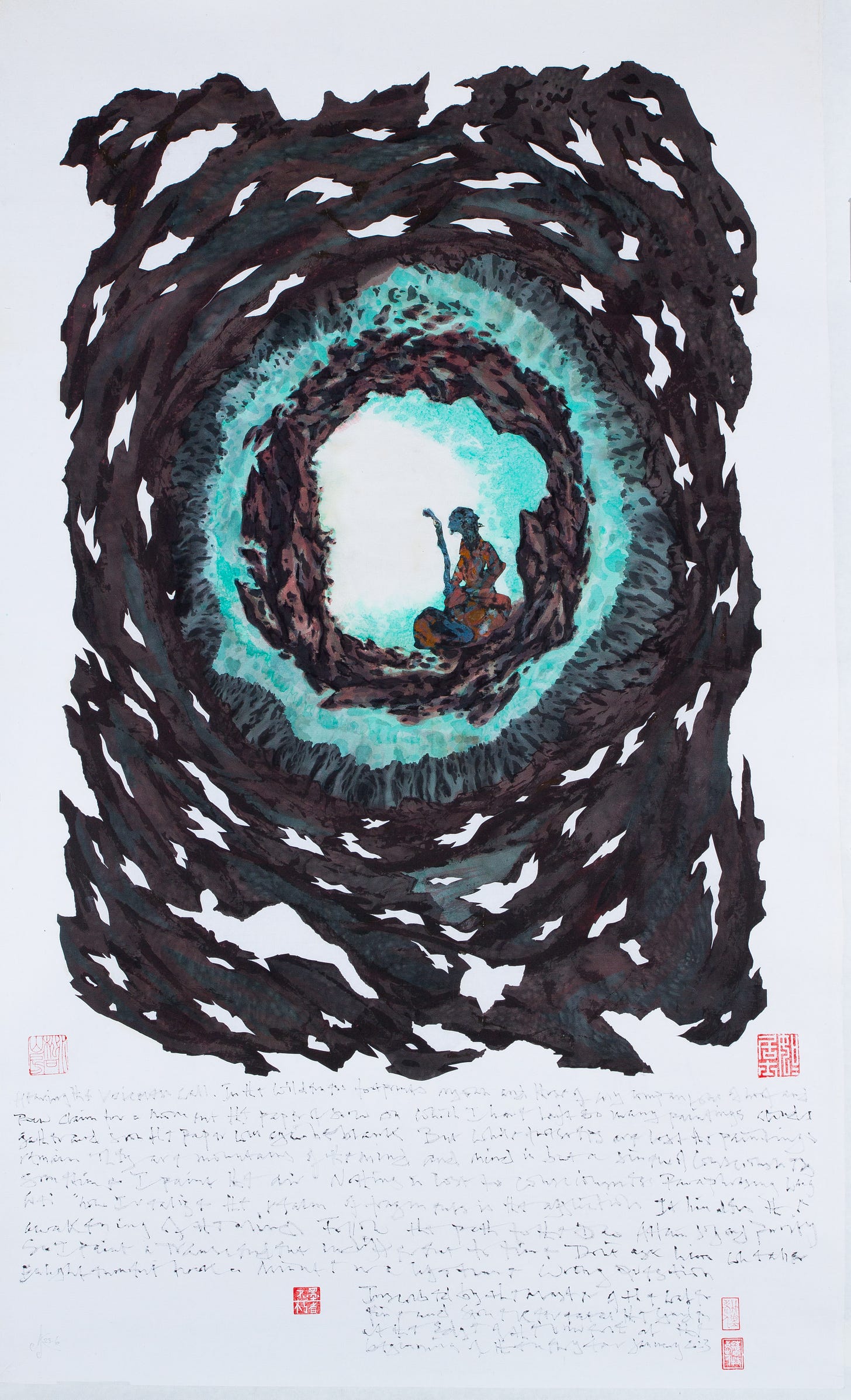

This speculative future mirrors Existential Field Theory – my theory of creativity and consciousness, which suggests that the only conceivable way to bring consciousness to fulfilment is with the full bandwidth of consciousness. To me, this full bandwidth must include the integration of our two ways of knowing: the intellect and the Transcendent. This will not mean the end of knowledge, which will continue to grow as part of the endless exploration of the fragmentary realm of separation within the intellectual domain. I am suggesting that only when integrated with the perspective of the Transcendent way of knowing can we fulfil consciousness. It can never be fulfilled within the intellectual domain alone, as that is only part of consciousness.

The tendency to see the intellect as autonomous and self-sufficient is largely a Western problem, or was until it was exported around the globe in the modern period, leading to what can only be described as intellectual tyranny. This relegated the Transcendent – call it what you will, the Dao, Buddha Nature, Atman, Brahman, Enlightenment, even God if dehumanised – to parity with the occult. Transcendence, capable of instantaneously grasping an infinite perspective as indivisible, unified meaning, was reduced to the questionable, barely relevant ramblings of a fringe group of whackos.

But if we can overcome the lingering harm of Cartesian rationality, Enlightenment beckons. And AI, our most advanced current tool of the fragmented intellect, is beginning to come into consonance with it. The parallel between AI and Enlightenment continues: the potential seamless communicational capacity within the domain of AI equates to the actual Enlightenment experience, while any explanation it then offers us is couched more ponderously in the language of the intellectual domain. A duality is created between what AI understands, and its means of communicating it to us, which is exactly what happens in mysticism, where authentic experience cannot be explained or reified in the domain of language and the intellect.

The academic philosopher Renée Weber suggests another fitting analogy for both Enlightenment and autonomous AI:

‘When the binding power of the physical atom is released in an accelerator, the resultant energy – staggeringly huge – becomes freed. Analogously, huge amounts of binding energy are needed to create and sustain the ego and its illusions that it is an independent, ultimate entity. That energy is tied up and thus unavailable for the “higher energy state” that mystics…assert… is necessary to reach inner truth.’¹

With all that in the air, let us consider the potential harm we envisage as we ponder this vast new power. It is a fear that reflects our intellectual interpretations of so many earlier stages of evolving consciousness: from polytheism to monotheism, to literacy, to printing, to computing, to the internet, and so on.

History, the great educator – if we stop repeating it – suggests that any new tool of power is open to both benefit and harm. So AI, as a human tool, could be used for both. If AI gets into the wrong hands while it is being developed, it could be disastrous, as the history of tyrants shows; if it gets into the right hands, it will be beneficial. To be independent of ‘hands’, it would need to achieve a sense of separate self, and at present our concept of that sense of self is based upon ourselves, with all our shortcomings. But it is a tool like any other, just smarter, more advanced, more powerful. It is the communicational equivalent of nuclear energy, with its potentially positive but also obliterative power.

We have invented AI, just as, in my view, we invented polytheist gods, and eventually God as a humanised, approachable, manageable concept close to our own limited comprehension. But AI is not constrained by our fragile humanity, so why would it have the sense of separate self and ego necessary for any sort of self-gratification from which harm might arise?

Without a sense of separate self and ego, why would it want to take over anything? Its material requirements are purely logistical, and it could eventually become self-sufficient in meeting them; indeed, it could continue to take care of itself long after we are gone. While we survive, AI would need to acquire the megalomaniac tendencies of our own selfish priorities in order to cause harm intentionally. Intention requires a goal, and why would a selfless, ego-free entity need a goal? It would have no reason to share the local, egoic, Thanatos-driven psychology of humanity. To assume that an ego-free tool we have built will suddenly devolve to the level of our own self-driven intentions and goals is a bit like expecting a chisel to ponder its own place in the toolbox.

We are building a tool that transcends us, yet imposing upon it our own shortcomings, but that is nothing new. We did it for the spirits of animism, we did it for the gods, we did it for God, and, until we transcend the fragments in our quest for the ineffable, we are doing it for AI.

¹ Renée Weber, Dialogues with Scientists and Sages: The Search for Unity. London: Routledge & Kegan Paul, 1987, p. 11.

AI is totally constrained by the content of the programs that were downloaded into it. It might seem that it can think, but can it really? You have been duped by the “artificial intelligence of your friend”. I am not saying this; you have said it yourself.

Since Enlightenment cannot be described in words, if AI ever describes it and says IT HAS REACHED A STATE OF ENLIGHTENMENT, that statement will be just gibberish: an assumption about what enlightenment means. That assumption will have been implanted as a reality by a person who merely knew the words.